Anthropic just killed the hack that let you use Claude Pro with your favorite third-party tools.

Starting today, apps like OpenClaw can't tap into your subscription anymore. You'll have to pay per token or buy usage bundles instead.

It's a classic case of a company closing the loopholes once it gets big enough.

But wait, there's more:

A robotics AI that can fold, pack, and improvise like a human worker

Why creators are fighting for "human-made" labels (and why it's harder than you think)

I'm Alex. Welcome to L8R by Innov8.

Let's dive deep 🐰

In today's post:

• Anthropic kills third-party Claude tools

• Generalist's robot brain does what humans do

• Why "Human-Made" labels are harder than they look

Anthropic Kills the Cheap AI Buffet for Third-Party Agents

You can no longer game the system with your Claude subscription.

Anthropic just blocked third-party tools like OpenClaw from using flat-rate Claude Pro and Max accounts.

If you want to run heavy automation bots, you have to pay by the token now.

The details:

Anthropic officially stopped third-party tools from using subscription tokens on April 4, 2026.

Some users squeezed up to $5,000 worth of server power out of a $200 per month Claude Max plan.

Third-party apps bypass Anthropic's cost-saving Prompt Cache feature, which causes massive server strain.

You can still use official apps like Claude Code on your subscription, but outside tools require a standard API key.

Affected users get a one-time credit, or they can ask for a full refund by April 17, 2026.
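To put the numbers above in perspective, here is a quick back-of-the-envelope sketch. It uses only the two figures quoted in this item ($200 per month and up to $5,000 of usage); the variable names are ours, and the real cost to Anthropic per user is not something we know.

```python
# Figures quoted in the item above; this is an illustration, not Anthropic's accounting.
subscription_price_usd = 200   # Claude Max plan, per month
compute_consumed_usd = 5_000   # upper end of what some users reportedly squeezed out

# How many times over the plan price the heaviest users consumed
ratio = compute_consumed_usd / subscription_price_usd

# Gap between what was used and what was paid, per month
shortfall_usd = compute_consumed_usd - subscription_price_usd

print(f"Usage-to-price ratio: {ratio:.0f}x")
print(f"Monthly gap per heavy user: ${shortfall_usd:,}")
```

On those figures, the heaviest users were pulling roughly 25 times what they paid for.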

Why it matters:

AI companies are bleeding money to keep their servers running.

Flat-rate plans were a great way to get users hooked, but autonomous agents run in endless loops and cost too much.

This move shows the AI industry is finally waking up to the math.

Subsidized server power is dead, and developers will have to face real operational costs.

💡 L8R's Take:

This was bound to happen. You cannot run a business when customers take ten times more than they pay for.

If your startup relies on abusing a $200 subscription plan, you do not have a real business model.

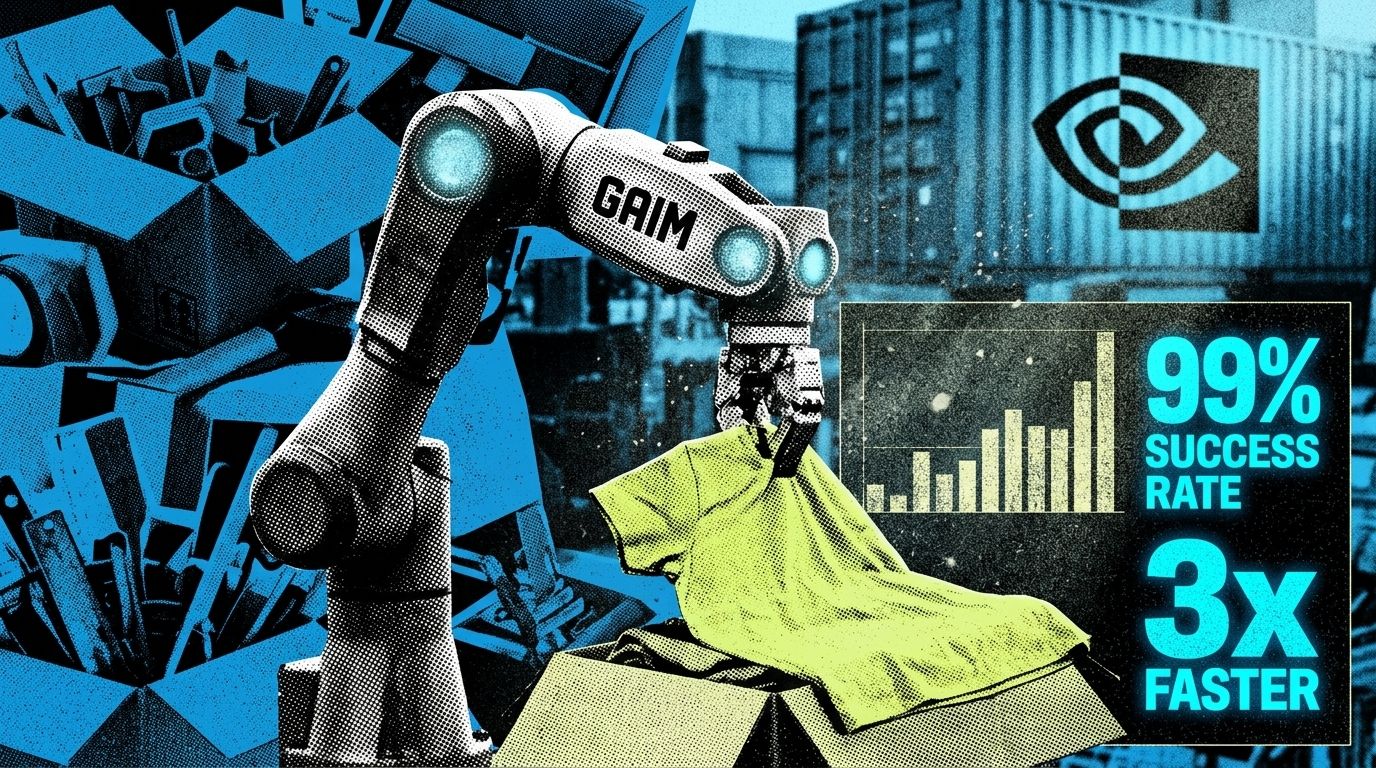

Robots finally get their 'ChatGPT moment' with GEN-1

Generalist AI just dropped GEN-1, a massive new brain for physical robots.

This model lets machines fold clothes, pack boxes, and fix things without breaking a sweat.

It takes robotics out of neat factories and puts them into the messy real world.

The details:

GEN-1 hits a massive 99% success rate on hard physical tasks, jumping way up from the old 64% standard.

It works three times faster than other top systems and only needs about one hour of fresh data to learn a brand new job.

The team trained this beast on over 500,000 hours of real-world physical interaction data.

It actually improvises when things go wrong, like fixing a snagged t-shirt without needing extra code.

Generalist AI launched this on April 2, 2026, backed by a $140 million war chest from giants like Nvidia and Bezos.

Why it matters:

Getting robots to do physical chores is a nightmare because the real world is messy.

Old robots needed perfect, scripted environments to work at all.

GEN-1 fixes this massive data bottleneck. It proves that AI scaling laws work for physical movement just like they did for text.

💡 L8R's Take:

I am completely blown away by that one-hour training time.

If you can teach a robot a new physical skill in 60 minutes, the whole game changes.

Every warehouse and factory will run on these brains before the decade ends.

The Messy Fight for '100% Human' Labels

The internet is drowning in AI content, and real artists are desperately trying to prove they actually made their work.

Right now, everyone is fighting over how to label things as "human-made."

It is a giant mess with zero clear rules.

The details:

At least 12 different groups are pushing their own badges, like "Not by AI" and "Proudly Human."

Big tech companies like Google, Meta, and Adobe back a standard called C2PA, but many creators just ignore it.

Instagram head Adam Mosseri says proving real media is actually easier than catching fake media.

Current AI detection tools fail all the time, making trust-based labels completely unreliable.

Why it matters:

People do not trust what they see online anymore.

Because AI creators make more money by hiding their use of bots, voluntary labels are failing.

Soon, actual human creativity will become a rare luxury item.

You might even need a blockchain token just to prove a real person painted a picture.

💡 L8R's Take:

Stickers and badges will not save creators right now.

If bad actors can easily lie about using AI, a "Proudly Human" badge means absolutely nothing.

We need built-in camera and software proof from the start, or this whole system is a joke.

🚀 Quick L8R Summary

Anthropic blocks third-party tools: Claude Pro and Max subscriptions no longer work with external apps like OpenClaw. You now pay per token or buy usage bundles.

Generalist drops GEN-1 robot model: New AI model makes robots handle tricky tasks like folding and packing with 99% success rates and human-level speed.

Creators fight for "human-made" labels: With AI flooding the internet, artists want verification badges to prove their work is real—but there's no standard yet.

📩 How was today's L8R? 👇

We read every reply - just reply to this email and let us know how we can improve!

See you in the next L8R, bye… 👋